Message of the Holy Father for the 60th World Communications Day 2026

Protect human voices and faces

Dear Brothers and Sisters,

The face and voice are unique features that distinguish each person — they show their unique identity and are a constitutive element of each meeting. The ancients knew this well. Thus, to define the human person, the ancient Greeks used the word “face” (prósopon), which etymologically indicates what is in front of the gaze, the place of presence and relationship. The Latin term persona (from per-sonare), on the other hand, includes sound — not just any sound, but the unique voice of a specific person.

The face and voice are sacred. They were given to us by God, who created us in his image and likeness, calling us to life with the word that he himself addressed to us; a word that first resounded through the centuries in the voices of the prophets and then in the fullness of time became flesh. This Word, God’s communication of himself, we have also been able to hear and see directly (cf. 1 John 1:1-3), because it has been made known in the voice and Face of Jesus, the Son of God.

From the moment of creation, God wanted man as his interlocutor and, as St. Gregory of Nyssa says, he imprinted on his face the reflection of God’s love, so that he could fully live his humanity through love. Guarding human faces and voices, therefore, means guarding this seal, this indelible reflection of God’s love. We are not a species composed of predefined biochemical algorithms. Each of us has an irreplaceable and unique vocation that results from life and manifests itself precisely in communication with others.

If we don’t follow this principle, digital technology can radically change some of the fundamental pillars of human civilization that we sometimes take for granted. By simulating human voices and faces, wisdom and knowledge, awareness and responsibility, empathy and friendship, the systems known as artificial intelligence not only interfere with information ecosystems, but also enter the deepest level of communication, which is that of human relationships.

This challenge is therefore not technological, but anthropological. Protecting our faces and voices ultimately means protecting ourselves. Embracing the opportunities offered by digital technology and artificial intelligence with courage, determination and discernment does not mean hiding the tipping points, ambiguities and threats from ourselves.

Do not give up your own thinking

There has long been ample evidence that algorithms designed to maximize social media engagement, which is profitable for platforms, reward quick emotions while penalizing more time-consuming manifestations of human activity, such as the effort to understand and reflect. By locking groups of people in bubbles of easy consensus and easy outrage, these algorithms weaken the ability to listen and think critically and increase social polarization.

On top of that, there is a naïve, uncritical trust in artificial intelligence as an omniscient “friend,” the source of all knowledge, the archive of all memories, the “oracle” that gives all advice. All this can further weaken our ability to think analytically and creatively, to understand meanings, to distinguish between syntax and semantics.

While AI can provide support and assistance in managing communication tasks, avoiding the effort of our own thinking and settling for artificial statistics can weaken our cognitive, emotional, and communication abilities in the long run.

In recent years, AI systems have taken more and more control over the production of texts, music, and videos. In this way, a significant part of the human creative industry is threatened with liquidation and replacement with the “Powered by AI” label, turning individuals into passive consumers of ill-conceived ideas and anonymous products devoid of authorship and love. On the other hand, the masterpieces of human genius in the fields of music, art and literature are reduced to the role of a mere training ground for machines.

The question that is close to our hearts, however, is not what a machine can or will be able to do, but what we can and will be able to do, growing in humanity and knowledge, through the wise use of such powerful tools at our disposal. Man has always been tempted to appropriate the fruits of knowledge without effort, commitment, search and personal responsibility. To give up the creative process and to give machines our own mental functions and imagination, however, means burying the talents we have received in order to grow as persons in relationship with God and other people. This means hiding our face and lowering our voice.

To Be or to Pretend to Be: A Simulation of Relationships and Reality

When we browse our feeds, it becomes increasingly difficult for us to understand whether we are interacting with other people or with bots or virtual influencers. The opaque activities of these automated agents influence public debates and the choices made by individuals. Chatbots based on large language models (LLMs), in particular, prove to be surprisingly effective in covert persuasion by continuously optimizing personalized interaction. The dialogical, adaptive and mimetic structure of these language models is able to mimic human feelings and thus simulate a relationship. This anthropomorphization, which can even be funny, is also deceptive, especially for the most vulnerable. Chatbots made excessively “affectionate,” as well as always present and available, can become hidden architects of our emotional states and thus violate and occupy people’s sphere of intimacy.

Technology that exploits our need for relationships can not only have painful consequences for the fate of individuals, but can also harm the social, cultural, and political fabric of societies. This happens when we replace relationships with others with relationships with artificial intelligence that has been trained to catalog our thoughts and then build a world of mirrors around us, in which everything is created “in our image and likeness.” In this way, we allow ourselves to be robbed of the possibility of meeting another human being, who is always different from us and with whom we can and must learn to engage. Without the acceptance of difference, there can be neither relationships nor friendship.

Another great challenge posed by these new systems is the phenomenon of bias, which leads to the acquisition and transmission of a falsified perception of reality. AI models are shaped by the worldview of the people who create them, and they can, in turn, impose ways of thinking, replicating stereotypes and biases present in the data from which they draw. Lack of transparency in algorithm design, coupled with inadequate social representation of data, leaves us trapped in networks that manipulate our thoughts and perpetuate and exacerbate existing social inequalities and injustices.

The risk is huge. The power of simulation is so great that artificial intelligence can deceive us by creating parallel “realities” and appropriating our faces and our voices. We are immersed in multidimensionality, in which it is increasingly difficult to distinguish reality from fiction.

On top of that, there is the problem of lack of precision. Systems that give statistical probability as knowledge actually offer us at most approximate information, which is sometimes even real “hallucinations.” The lack of verification of sources, coupled with the crisis of field journalism, which requires constant gathering and verification of information in the places where events take place, can create even more fertile ground for disinformation, causing growing feelings of mistrust, confusion and uncertainty.

A Possible Alliance

Behind this huge, invisible force that engulfs us all, there are only a handful of companies whose founders have recently been presented as the creators of the “Person of the Year 2025,” i.e., the architects of artificial intelligence. This raises serious concerns about oligopolistic control over algorithmic systems and artificial intelligence, which are able to subtly direct behavior and even rewrite the history of humanity, including the history of the Church, often without our really being aware of it.

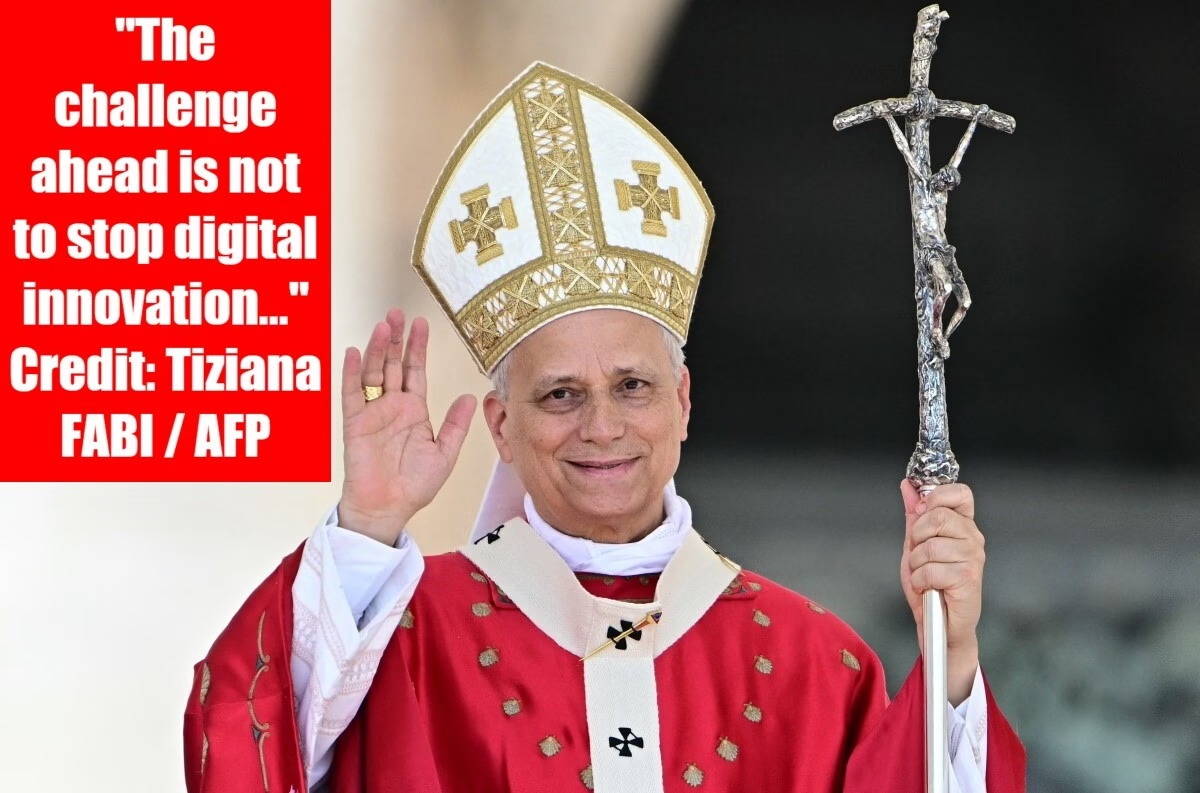

The challenge ahead is not to stop digital innovation, but to steer it, being aware of its ambivalent nature. Each of us should speak up in defense of individuals, so that these tools can be truly integrated by us as allies.

This alliance is possible, but it must be based on three pillars: responsibility, cooperation and education.

First of all, responsibility. Depending on the function performed, it can take various forms, such as honesty, transparency, courage, foresight, the obligation to share knowledge, the right to information. In general, no one can shirk their responsibility for the future we are building.

For those at the forefront of online platforms, this means making sure that their business strategies are not only driven by the criterion of maximizing profits, but also by a far-sighted vision that takes into account the common good – just as each of them cares about the well-being of their own children.

Creators and developers of AI models are required to be transparent and socially accountable with regard to the design principles and moderation systems underlying their algorithms and the models they develop, so as to foster informed consent by users.

The same responsibility also rests with national legislators and regulators, whose task it is to ensure that human dignity is respected. Appropriate regulation can protect individuals from emotional attachment to chatbots and limit the spread of false, manipulative or misleading content, preserving the integrity of information as opposed to its misleading simulation.

Media and communications companies, on the other hand, cannot allow algorithms, aimed at winning the fight for a few seconds of extra attention at all costs, to prevail over fidelity to their professional values, which are aimed at seeking the truth. Public trust is earned through accuracy and transparency, not through the pursuit of any interest. AI-generated or manipulated content should be clearly labeled and distinguished from human-created content. It is necessary to protect the authorship and sovereign ownership rights of the works of journalists and other content creators. Information is a public good. A constructive and meaningful public service is not based on ambiguity, but on transparency of sources, the involvement of stakeholders and a high standard of quality.

We are all called upon to cooperate. No sector can tackle the challenge of leading digital innovation and managing AI on its own. Therefore, it is necessary to create security mechanisms. All stakeholders — from the tech industry to policymakers, from creative companies to academia, from artists to journalists and educators — must be involved in building and making an informed and responsible digital citizenship a reality.

This is what education aims to do: to increase our personal capacity for critical thinking, to assess the credibility of the sources and the possible interests behind the selection of information that reaches us, to understand the psychological mechanisms involved, and to enable our families, communities and associations to develop practical criteria for a healthier and more responsible culture of communication.

Therefore, it is becoming increasingly urgent to introduce media, information and artificial intelligence literacy into education systems at all levels, a step that some civil institutions already promote. As Catholics, we can and must make our contribution so that people, especially young people, acquire critical thinking skills and grow in freedom of spirit. This literacy should also be integrated into wider lifelong learning initiatives that involve older people and marginalized members of society, who often feel excluded and powerless in the face of rapid technological change.

Media, information and artificial intelligence literacy will help everyone not to succumb to the anthropomorphizing drift of these systems, but to treat them as tools; to always verify externally the sources provided by artificial intelligence systems, which may be inaccurate or erroneous; and to protect their privacy and their own data, knowing the security parameters and the possibilities of raising objections. It is important to educate ourselves and others in the conscious use of artificial intelligence and, in this context, to protect our image (photos and audio recordings), our own face and voice, to prevent them from being used to create harmful content and behaviors, such as digital fraud, cyberbullying and deepfakes, that violate people’s privacy and intimacy without their consent. Just as the Industrial Revolution required basic literacy to enable people to respond to novelties, the digital revolution requires digital literacy (along with humanistic and cultural formation) to understand how algorithms shape our perception of reality, how artificial intelligence biases work, what mechanisms determine the appearance of certain content in our information streams (feeds), and what the assumptions and economic models of the AI-based economy are, and how they can change.

We need the face and voice to signify the person once again. We must safeguard the gift of communication as the deepest human truth towards which every technological innovation must be directed.

In proposing these reflections, I thank all those who work to achieve the goals outlined here, and I wholeheartedly bless all those who work for the common good through the means of communication.

From the Vatican, 24 January 2026, in memory of St. Francis de Sales.

LEO PP. XIV

Editor’s Note: The 2026 World Communications Day will be celebrated on 17 May 2026. This English translation from Polish provided by the Vatican website was culled from the National Catholic Register – https://www.ncregister.com/commentaries/012426-communications-day-message-from-pope-leo.